The Platform Team Anti-Patterns We Learned the Expensive Way

Platform engineering has become the default answer to developer productivity problems. Build an internal developer platform, the thinking goes, and your application teams will ship faster, your infrastructure will become more consistent, and your operations costs will drop.

Sometimes that’s exactly what happens. More often, we’ve watched platform teams become expensive bottlenecks that slow down the very engineers they were meant to help.

After years of building platform infrastructure for companies ranging from seed-stage startups to enterprises, we’ve compiled the anti-patterns that consistently predict platform team failure. These aren’t theoretical concerns. They’re patterns we’ve seen burn through six-figure monthly budgets and set engineering organizations back by quarters.

The Golden Path That Nobody Walks

The most seductive platform team failure mode is building beautiful infrastructure that solves imaginary problems.

It typically starts innocently. The platform team surveys application developers, collects feature requests, and builds a prioritized roadmap. They ship a polished internal developer portal, a sophisticated CI/CD pipeline, and a Kubernetes abstraction layer that handles 90% of use cases.

Then adoption stalls at 15%.

The problem is that surveys and feature requests measure what developers say they want, not what they actually need. Engineers are notoriously bad at predicting their own bottlenecks. They’ll ask for faster builds when the real problem is unclear deployment processes. They’ll request more compute when the actual issue is poor observability making debugging painfully slow.

We’ve learned to identify real needs through observation, not surveys. Watch where engineers spend time waiting. Count the Slack messages asking “how do I deploy X?” Track the workarounds that proliferate outside official tooling.

One client had invested three months building a sophisticated feature flag system because their surveys showed high demand. Actual usage data revealed that developers needed better secrets management—they’d been hardcoding credentials because the existing solution required seven clicks and a 30-second page load.

The fix: Instrument your existing workflow before building new abstractions. Shadow sessions beat surveys. Usage analytics beat roadmap votes.
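As a minimal sketch of what instrumenting an existing workflow can look like, the snippet below assumes you can export timestamped events for each delivery step (from CI logs, ticket timestamps, or shadow-session notes). It ranks steps by the total time engineers spent waiting on them. The `StepEvent` shape and the step names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class StepEvent:
    """One hypothetical workflow step: when an engineer started waiting and when it finished."""
    engineer: str
    step: str            # illustrative names, e.g. "build", "deploy", "get-secret"
    waiting_since: datetime
    done_at: datetime


def wait_hours_by_step(events: list[StepEvent]) -> list[tuple[str, float]]:
    """Sum waiting time per step (in hours) and sort steps from worst to best."""
    totals: dict[str, float] = defaultdict(float)
    for e in events:
        totals[e.step] += (e.done_at - e.waiting_since).total_seconds() / 3600
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    t = datetime(2024, 1, 8, 9, 0)
    events = [
        StepEvent("ana", "build", t, t.replace(hour=9, minute=12)),
        StepEvent("ana", "get-secret", t, t.replace(hour=11)),
        StepEvent("raj", "deploy", t, t.replace(hour=10)),
        StepEvent("raj", "get-secret", t, t.replace(hour=10, minute=30)),
    ]
    for step, hours in wait_hours_by_step(events):
        print(f"{step:12s} {hours:5.2f} h")
```

The collection mechanism matters less than the ranking: the goal is to let observed waiting time, rather than survey answers, decide what gets built next.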

The Abstraction That Leaks at Scale

Platform teams often create abstractions that work beautifully at small scale and collapse under production pressure.

The canonical example is the Kubernetes wrapper that “simplifies” deployment. Your platform team builds a clean YAML interface with sensible defaults. Developers don’t need to understand pods, services, or ingress—they just specify a few high-level parameters and the platform handles everything.

This works until it doesn’t. An application needs custom resource limits. Another requires a specific scheduling affinity. A third needs to mount a persistent volume in an unusual configuration. Suddenly developers are either waiting weeks for platform team support or learning Kubernetes anyway to work around the abstraction.

We call this the “ceiling problem.” Every abstraction has a ceiling where it stops being helpful and starts blocking progress. Good abstractions have escape hatches. Bad abstractions force you to either wait for platform team changes or abandon the platform entirely.

The most successful platform investments we’ve seen expose progressive complexity rather than hiding it. Sensible defaults for the 80% case, clear documentation for the 15% edge cases, and explicit escape hatches for the 5% that genuinely need raw access.

The fix: Design for the failure mode, not the happy path. Every abstraction should include a documented “how to work around this when it doesn’t fit” section.
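Here is a minimal sketch of progressive complexity, assuming a hypothetical platform interface (`ServiceSpec`, `render_deployment`, and `raw_patch` are illustrative names, not any real tool’s API). Required fields cover the common case, documented knobs cover frequent edge cases, and a raw patch merged over the generated Kubernetes Deployment serves as the escape hatch.

```python
import copy
from dataclasses import dataclass, field
from typing import Any


def deep_merge(base: dict[str, Any], patch: dict[str, Any]) -> dict[str, Any]:
    """Recursively merge `patch` into a copy of `base`; patch values win.

    Lists are replaced wholesale rather than merged element by element.
    """
    out = copy.deepcopy(base)
    for key, value in patch.items():
        if isinstance(value, dict) and isinstance(out.get(key), dict):
            out[key] = deep_merge(out[key], value)
        else:
            out[key] = copy.deepcopy(value)
    return out


@dataclass
class ServiceSpec:
    """Hypothetical platform interface with three layers of control."""
    # Layer 1: the common case. Only a name and image are required.
    name: str
    image: str
    replicas: int = 2
    # Layer 2: documented knobs for frequent edge cases.
    cpu_limit: str = "500m"
    memory_limit: str = "512Mi"
    # Layer 3: escape hatch. Raw manifest fields merged over the generated output.
    raw_patch: dict[str, Any] = field(default_factory=dict)


def render_deployment(spec: ServiceSpec) -> dict[str, Any]:
    """Render a Kubernetes apps/v1 Deployment (as a dict) from the spec."""
    labels = {"app": spec.name}
    manifest = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": spec.name},
        "spec": {
            "replicas": spec.replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": spec.name,
                        "image": spec.image,
                        "resources": {"limits": {"cpu": spec.cpu_limit,
                                                 "memory": spec.memory_limit}},
                    }],
                },
            },
        },
    }
    return deep_merge(manifest, spec.raw_patch)


if __name__ == "__main__":
    # A team that needs a scheduling constraint the interface doesn't model
    # uses the escape hatch instead of waiting on the platform team.
    spec = ServiceSpec(
        name="billing",
        image="registry.example.com/billing:1.4",
        raw_patch={"spec": {"template": {"spec": {"nodeSelector": {"disktype": "ssd"}}}}},
    )
    print(render_deployment(spec)["spec"]["template"]["spec"]["nodeSelector"])
```

One design caveat: because `deep_merge` replaces lists wholesale, overriding a single container field through `raw_patch` means supplying the whole `containers` list. A production version might lean on Kubernetes strategic merge patches or Kustomize overlays instead of a hand-rolled merge.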

The Governance Theater

Some platform teams confuse process with progress.

They implement approval workflows for infrastructure changes. They create tickets for every environment request. They establish architecture review boards that meet every two weeks. Each individual process seems reasonable in isolation.

Combined, they create a bureaucratic tax that turns two-hour tasks into two-week ordeals.

We worked with one team where provisioning a new staging environment required: a ticket in ServiceNow, approval from an architect, a security review, a cost approval from finance, and a slot in the weekly change advisory board meeting. Average time from request to provisioned environment: 23 days.

Developers responded predictably. They kept environments running indefinitely rather than spinning up fresh ones. They shared credentials to avoid provisioning personal access. They ran production tests against staging because creating a proper test environment took too long.

The governance designed to reduce risk actually increased it.

The alternative isn’t zero governance—it’s governance that scales with risk. Self-service provisioning for non-production resources with automatic cleanup. Lightweight review for production-adjacent changes. Full review process only for changes that can actually cause significant damage.
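A minimal sketch of risk-scaled governance follows. It routes each request to the lightest review path that matches its risk and gives self-service resources an automatic expiry. The tier names, the request fields, and the seven-day TTL are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Review(Enum):
    SELF_SERVICE = "self-service"  # provisioned immediately, cleaned up automatically
    LIGHTWEIGHT = "lightweight"    # e.g. a single peer approval (illustrative)
    FULL = "full"                  # architecture, security, and change review


@dataclass
class ChangeRequest:
    environment: str         # assumed values: "dev", "staging", "production"
    touches_prod_data: bool  # e.g. a staging stack that reads a production replica
    blast_radius: str        # assumed values: "service" or "org-wide"


def review_path(req: ChangeRequest) -> Review:
    """Route a request to the lightest review that matches its risk."""
    if req.environment == "production" and req.blast_radius == "org-wide":
        return Review.FULL
    if req.environment == "production" or req.touches_prod_data:
        return Review.LIGHTWEIGHT
    return Review.SELF_SERVICE


def expires_at(req: ChangeRequest, now: datetime,
               ttl: timedelta = timedelta(days=7)) -> Optional[datetime]:
    """Self-service resources get a default TTL so forgotten environments are cleaned up."""
    if review_path(req) is Review.SELF_SERVICE:
        return now + ttl
    return None
```

The specific thresholds are the part worth debating. The structural point is that most requests should fall through to the cheapest path, and full review should be the exception.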

The fix: Measure governance by outcome, not process compliance. Track time-to-developer-productive, not number-of-approvals-collected.
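To make the outcome metric concrete, here is a small sketch that summarizes request-to-ready lead time instead of counting approvals. It assumes you can pull a "requested" and a "ready" timestamp per request, for example from a ticketing export. It reports the median alongside a nearest-rank p90, because a few very slow requests can hide behind a healthy median.

```python
import math
import statistics
from datetime import datetime


def lead_time_days(requested: datetime, ready: datetime) -> float:
    """Elapsed time from request to usable resource, in days."""
    return (ready - requested).total_seconds() / 86400


def summarize(lead_times: list[float]) -> dict[str, float]:
    """Median and nearest-rank p90 of lead times (expects a non-empty list)."""
    ordered = sorted(lead_times)
    p90_index = math.ceil(0.9 * len(ordered)) - 1
    return {"median": statistics.median(ordered), "p90": ordered[p90_index]}


if __name__ == "__main__":
    samples = [1.0, 2.0, 2.0, 3.0, 23.0]  # hypothetical lead times in days
    print(summarize(samples))
```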

The Platform Team as Permission Gate

This anti-pattern is subtle because it often feels like doing the right thing.

Platform teams accumulate responsibilities: infrastructure provisioning, security scanning, compliance checking, cost optimization, reliability engineering. Each responsibility seems like a reasonable centralization.

But centralization creates queues, and queues create wait times, and wait times create frustration, and frustration creates workarounds.

The platform team becomes a chokepoint not because they’re slow or incompetent, but because they’re doing too many things that could be distributed. When every infrastructure request flows through the same team, that team becomes the constraint on organizational throughput—regardless of how capable they are.
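One way to see why wait times explode at a central team is a textbook M/M/1 queueing model. This is an idealization we are swapping in, since real request streams are neither Poisson nor served by a single worker. With arrival rate λ and service rate μ, the mean time a request waits before work starts is Wq = λ / (μ(μ − λ)). For any fixed service rate, that wait grows without bound as incoming requests approach what the team can handle. The capacity figure below is hypothetical.

```python
def mm1_wait(arrival_rate: float, service_rate: float) -> float:
    """Mean time a request spends queued (before work starts) in an M/M/1 queue.

    Rates are in requests per week, so the result is in weeks.
    """
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable: arrivals meet or exceed capacity")
    return arrival_rate / (service_rate * (service_rate - arrival_rate))


if __name__ == "__main__":
    capacity = 10.0  # hypothetical: the team can close 10 requests per week
    for load in (5.0, 8.0, 9.0, 9.5):
        print(f"utilization {load / capacity:.0%}: wait {mm1_wait(load, capacity):.2f} weeks")
```

With these assumed numbers, going from 80% to 95% utilization moves the average queue wait from 0.4 to 1.9 weeks, even though the team works exactly as fast as before.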

The healthiest platform teams we’ve seen operate as enablers rather than gatekeepers. They build tooling that lets application teams self-serve within guardrails. They focus on automation that eliminates the need for manual review rather than optimizing the review process.

One useful heuristic: if your platform team is frequently asked “can you do X for us?”, the platform isn’t a platform—it’s a service team with a misleading name. Platforms enable; service teams execute.

The fix: Shift metrics from tickets-completed to self-service-rate. The goal is reducing the number of requests that require human platform team involvement.
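A minimal sketch of the self-service-rate metric follows, assuming each request record notes whether a platform engineer had to act on it (the `Request` shape and the request kinds are hypothetical). It also lists the request kinds that most often needed a human, since those are the natural candidates for the next round of automation.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Request:
    kind: str             # illustrative kinds, e.g. "new-env", "dns-record"
    human_touched: bool   # did a platform engineer have to act on it?


def self_service_rate(requests: list[Request]) -> float:
    """Fraction of requests fulfilled with no platform-team involvement."""
    if not requests:
        return 0.0
    automated = sum(1 for r in requests if not r.human_touched)
    return automated / len(requests)


def top_manual_kinds(requests: list[Request], n: int = 3) -> list[tuple[str, int]]:
    """Request kinds that most often needed a human: candidates for automation."""
    counts = Counter(r.kind for r in requests if r.human_touched)
    return counts.most_common(n)
```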

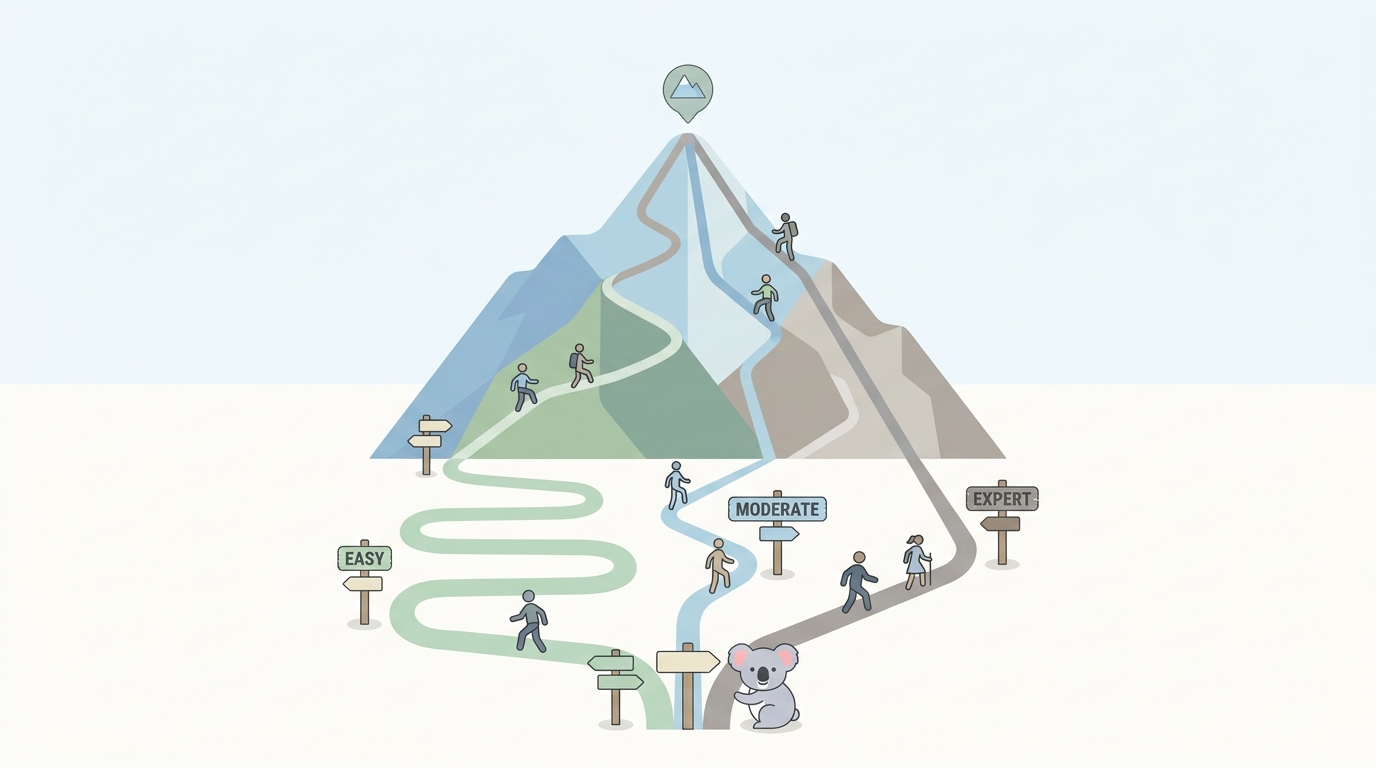

The Premature Platform Investment

Perhaps the most expensive anti-pattern is building platform infrastructure before you have enough application teams to justify it.

Platform teams have fixed costs: salaries, tooling, infrastructure for the platform itself. Those costs need to be amortized across enough consumers to make economic sense. A platform team of four engineers making $200K each needs to generate $800K+ in annual value just to break even.

For a company with three application teams, that means each team needs to gain roughly $267K+ in annual productivity ($800K ÷ 3). That’s a high bar: roughly equivalent to each team no longer needing one senior engineer, or to cutting deployment time by 80%.
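The break-even arithmetic is simple enough to sketch directly. This version counts salary only, using the figures from the example above; a fully loaded cost (benefits, tooling, the platform’s own infrastructure) would raise both numbers.

```python
def break_even(team_size: int, cost_per_engineer: float, app_teams: int) -> tuple[float, float]:
    """Annual value a platform team must create to pay for itself: (total, per app team).

    Salary only; a fully loaded cost would push both figures higher.
    """
    total = team_size * cost_per_engineer
    return total, total / app_teams


if __name__ == "__main__":
    total, per_team = break_even(team_size=4, cost_per_engineer=200_000, app_teams=3)
    print(f"total ${total:,.0f}, per team ${per_team:,.0f}")
    # Doubling the number of consuming teams halves the per-team bar.
    print(f"per team with 6 teams: ${break_even(4, 200_000, 6)[1]:,.0f}")
```

Running it prints a total of $800,000 and a per-team bar of $266,667, which drops to $133,333 with six consuming teams.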

We’ve seen startups with 20 engineers invest in dedicated platform teams, only to realize months later that the coordination cost of having a separate team exceeded the productivity benefits they delivered. The engineers would have been more valuable embedded in product teams, solving infrastructure problems as they emerged.

The inflection point varies, but we generally see platform teams become cost-effective somewhere between 50 and 100 engineers, or when you have 6+ application teams with infrastructure needs similar enough that centralized tooling pays off.

The fix: Start with a virtual platform team: engineers who spend part of their time on shared infrastructure while remaining embedded in product teams. Formalize into a dedicated team only when coordination overhead justifies it.

Building Platforms That Work

The platform teams that succeed share a few characteristics: they measure adoption obsessively, they build escape hatches into every abstraction, they minimize approval gates, and they optimize for self-service rather than ticket completion.

Most importantly, they maintain awareness that their job is to accelerate application teams, not to build impressive infrastructure. The platform is never the product. The platform is successful only when you can barely tell it exists—when application teams move fast and don’t think much about the tooling underneath.

If you’re evaluating whether to build a platform team, or wondering why your existing platform investment isn’t delivering expected returns, start by auditing against these anti-patterns. The problems are usually obvious once you know what to look for.

At Koalabs, we’ve helped teams diagnose platform dysfunction and build infrastructure that actually accelerates delivery. If you’re wrestling with any of these patterns, we’re happy to share what we’ve learned.
